Dive Brief:

- Clinical trials of invasive cardiovascular interventions are often “relatively small and fragile” and have “limited power to detect large treatment effects,” authors of a study published in JAMA Internal Medicine on Monday said.

-

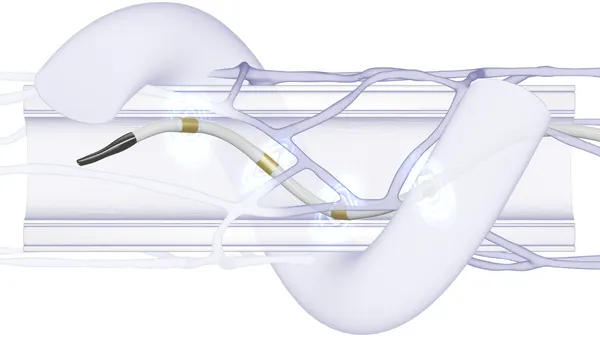

Researchers looked at 216 clinical trials from the last decade or so, more than half of which involved percutaneous coronary intervention, to show how modern studies are designed and reported. In doing so, they raised concerns about the robustness of clinical trial results.

- Some of the concerns center on the influence of commercial sponsors, which were involved in more than half of the trials. Presentations of data gathered in commercially funded trials were more likely to feature misleading spin, although industry involvement was also associated with many good practices, researchers said.

Dive Insight:

In addition to supporting FDA approvals and influencing reimbursement, randomized clinical trials influence treatment by shaping physician practices and the recommendations of professional bodies. However, some trials are more likely than others to generate reliable, generalizable results, and there is scope for results to be reported misleadingly, researchers said in a JAMA Internal Medicine investigation published this week.

Those factors led cardiovascular surgeons and other medical experts working across North America and Europe to collaborate on an analysis of the design, outcomes and reporting of clinical trials involving coronary, vascular and structural interventional cardiology, and vascular and cardiac surgical procedures that read out between 2008 and 2019. The analysis highlighted potential weaknesses in the evidence that informs treatment decisions.

One concern relates to the robustness of positive results. The researchers showed that in many cases changing the outcomes of five patients would be enough to turn a statistically significant result into a non-significant one, leading them to conclude the studies “were not particularly robust.”

“This finding is concerning given the substantial role that [clinical trial] results play in federal device approvals, payer criteria and clinical consensus guidelines,” the authors wrote. “A case can be made for specifying the number of patients needed to change the statistical significance of the outcomes in the primary reports of these trials.”
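The “number of patients needed to change the statistical significance” can be illustrated with a short calculation. The Python sketch below is not taken from the study and may differ from the authors' method. It assumes a two-arm trial with a yes/no outcome, Fisher's exact test and a 0.05 significance threshold. The function names and the trial numbers are hypothetical.

```python
from math import comb


def fisher_two_sided_p(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x events in row 1 with margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum every possible table that is no more likely than the observed one.
    # The small tolerance guards against floating-point ties.
    return sum(p for p in map(prob, range(lo, hi + 1)) if p <= p_obs * (1 + 1e-9))


def flips_to_lose_significance(events_1, n_1, events_2, n_2, alpha=0.05):
    """Count how many patients must switch from non-event to event, in the
    arm with fewer events, before the p-value reaches alpha or higher.

    Returns 0 if the result is not significant to begin with. If the arm
    runs out of patients first, the count reached at that point is returned.
    """
    # Order the arms so that arm 1 is the one with fewer events.
    if events_1 > events_2:
        events_1, n_1, events_2, n_2 = events_2, n_2, events_1, n_1
    flips = 0
    while events_1 < n_1 and fisher_two_sided_p(
        events_1, n_1 - events_1, events_2, n_2 - events_2
    ) < alpha:
        events_1 += 1
        flips += 1
    return flips


if __name__ == "__main__":
    # Made-up trial: 10 of 100 patients had an event on the intervention,
    # 25 of 100 on control. These numbers are not from the JAMA analysis.
    print(flips_to_lose_significance(10, 100, 25, 100))
```

A low count means a handful of different outcomes would have erased the headline result, which is the sense in which a trial is called fragile.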

By that measure of robustness, commercial and noncommercial clinical trials were comparable. The analysis identified specific concerns linked to commercial sponsorship in other areas, though.

Commercial sponsorship was associated with results that favored the intervention being tested, but it is unclear why. Industry trials used similar designs to noncommercial studies, although the authors think their analysis may have failed to capture more subtle differences.

Involvement of commercial sponsors was also linked to more distortion or misrepresentation of the data, such as the framing of an intervention as effective despite a trial missing its primary endpoint. According to the researchers, 81% of results from the analyzed commercially sponsored trials were presented with some sort of spin, as compared to 54% of noncommercial studies.

Those negative aspects of commercially sponsored clinical trials were offset by other factors: the analysis found that industry studies were larger, more inclusive and more likely to feature multiple sites. Such elements are associated with more robust, generalizable results.

Overall, the authors used the findings to call for changes to clinical trial designs and consensus guidelines.

"Given that it may not be feasible to consistently fund and design invasive interventional trials with more robust results, a case can be made for specifying the number of patients needed to change the statistical significance of the outcomes in the primary reports of these trials as well as in the meta-analyses and consensus guidelines that cite them."